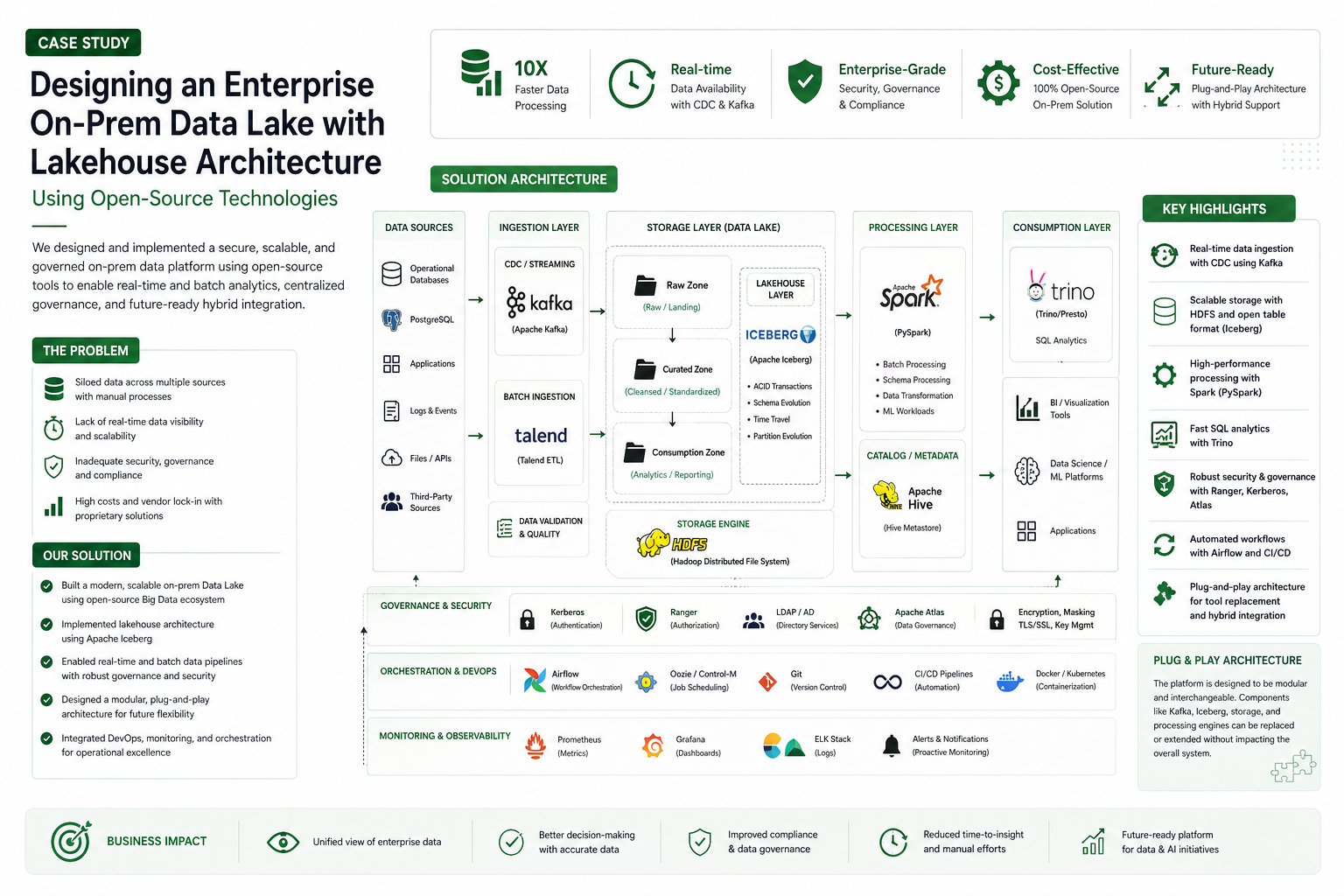

This case study describes how CogFocus designed and implemented an enterprise-grade, on-premises Data Lake platform using open-source Big Data technologies. The solution enabled scalable data ingestion, processing, governance, and analytics while ensuring security, compliance, and high availability. The architecture follows a lakehouse approach built on tools such as Apache Kafka, Spark, Iceberg, and Trino, with a plug-and-play design that supports future tool replacement and hybrid cloud integration.

The client required a fully on-premises enterprise Data Lake platform to handle large-scale structured and unstructured data while meeting strict security, governance, and compliance requirements. Existing systems lacked scalability, real-time processing capabilities, and centralized data governance. CogFocus designed an end-to-end data platform architecture covering the ingestion, storage, processing, and consumption layers using proven open-source technologies.

Data ingestion used change data capture (CDC) and streaming pipelines built on Apache Kafka, enabling real-time data flow from transactional systems such as PostgreSQL and other enterprise sources. For storage, a distributed data lake was built on HDFS, with a lakehouse layer implemented in Apache Iceberg to support schema evolution, ACID transactions, and efficient large-scale analytics.

Data processing was handled by Apache Spark (PySpark) for both batch and near-real-time workloads, providing high-performance distributed computation. Hive Metastore and catalog services managed metadata, and Trino was integrated to provide fast, interactive SQL querying across large datasets. ETL workflows were orchestrated with tools such as Apache Airflow and Talend, which handled dependency management, retries, and scheduling across complex pipelines.

Security and governance were a key focus: Kerberos-based authentication, Apache Ranger for fine-grained access control, LDAP/Active Directory integration, and data encryption were implemented to meet enterprise compliance standards. Tools such as Apache Atlas supported metadata governance by providing data lineage and traceability. Monitoring and observability relied on Prometheus, Grafana, and the ELK stack, enabling proactive issue detection and performance tuning.

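The dependency management and retry behaviour mentioned for the ETL workflows can be illustrated with a small, standard-library-only sketch. It is not Airflow or Talend code and does not reflect the client's actual pipelines: the stage names (`ingest_cdc`, `transform`, `publish`), the `run_pipeline` helper, and the retry count are all assumptions made for illustration.

```python
from graphlib import TopologicalSorter
from typing import Callable, Dict, List, Set


def run_pipeline(
    tasks: Dict[str, Callable[[], None]],
    upstream: Dict[str, Set[str]],
    max_retries: int = 2,
) -> List[str]:
    """Run tasks in dependency order, retrying a failing task up to max_retries times."""
    completed: List[str] = []
    # static_order() yields every task after all of its upstream tasks.
    for name in TopologicalSorter(upstream).static_order():
        for attempt in range(max_retries + 1):
            try:
                tasks[name]()
                break
            except Exception:
                # Give up (and stop the pipeline) once retries are exhausted.
                if attempt == max_retries:
                    raise
        completed.append(name)
    return completed


# Hypothetical stages; the first call to ingest_cdc simulates a transient failure.
attempts = {"ingest_cdc": 0}


def ingest_cdc() -> None:
    attempts["ingest_cdc"] += 1
    if attempts["ingest_cdc"] == 1:
        raise RuntimeError("simulated transient failure")


def transform() -> None:
    pass


def publish() -> None:
    pass


TASKS = {"ingest_cdc": ingest_cdc, "transform": transform, "publish": publish}
# Each task maps to the set of tasks that must finish before it starts.
UPSTREAM = {"ingest_cdc": set(), "transform": {"ingest_cdc"}, "publish": {"transform"}}

ORDER = run_pipeline(TASKS, UPSTREAM)
```

In this sketch a downstream stage never starts until its upstream stages have succeeded, and a transient failure is absorbed by the retry loop rather than failing the whole run. A production orchestrator adds scheduling, backoff, and alerting on top of the same idea.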
The entire platform was designed with a modular, plug-and-play architecture, allowing components such as Kafka, Iceberg, or processing engines to be replaced or extended without major redesign. Additionally, the system was built to support hybrid integration with cloud platforms when required, enabling future scalability and flexibility. As a result, the client achieved a secure, scalable, and high-performance data platform capable of handling real-time and batch workloads, improving data accessibility, governance, and operational efficiency across the organization.
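One common way to achieve this kind of plug-and-play replaceability is to have pipeline code depend on a narrow interface and select the concrete backend from configuration. The sketch below illustrates that pattern only; the `TableStore` interface, both in-memory backends, the `REGISTRY` mapping, and the `table_store` config key are hypothetical and are not taken from the CogFocus implementation.

```python
import json
from typing import Callable, Dict, List, Protocol


class TableStore(Protocol):
    """Hypothetical contract that pipeline code depends on."""

    def append(self, table: str, rows: List[dict]) -> None: ...

    def scan(self, table: str) -> List[dict]: ...


class InMemoryStore:
    """Stand-in backend that keeps rows as Python dicts."""

    def __init__(self) -> None:
        self._tables: Dict[str, List[dict]] = {}

    def append(self, table: str, rows: List[dict]) -> None:
        self._tables.setdefault(table, []).extend(dict(r) for r in rows)

    def scan(self, table: str) -> List[dict]:
        return list(self._tables.get(table, []))


class JsonLinesStore:
    """Second stand-in backend with a different internal format (JSON strings)."""

    def __init__(self) -> None:
        self._lines: Dict[str, List[str]] = {}

    def append(self, table: str, rows: List[dict]) -> None:
        self._lines.setdefault(table, []).extend(json.dumps(r) for r in rows)

    def scan(self, table: str) -> List[dict]:
        return [json.loads(line) for line in self._lines.get(table, [])]


# Swapping a component means registering a new factory and changing one config value.
REGISTRY: Dict[str, Callable[[], TableStore]] = {
    "memory": InMemoryStore,
    "jsonl": JsonLinesStore,
}


def build_store(config: Dict[str, str]) -> TableStore:
    return REGISTRY[config["table_store"]]()


def ingest_and_count(store: TableStore, rows: List[dict]) -> int:
    """Pipeline code: written once against the interface, unaware of the backend."""
    store.append("orders", rows)
    return len(store.scan("orders"))


ROWS = [{"order_id": 1}, {"order_id": 2}]
COUNTS = {
    name: ingest_and_count(build_store({"table_store": name}), ROWS)
    for name in REGISTRY
}
```

Because `ingest_and_count` only touches the interface, both backends produce the same result without any change to the pipeline code. In a real platform the backends would wrap actual services, and the interface would need to be designed around the features the pipelines rely on.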